Thermal Design for Embedded Computers

As designs get smaller, power densities at all packaging levels increase dramatically. Removing heat is critical to the operation and long-term reliability of electronics. Keeping component temperatures within specification is the universal criterion used to judge the thermal design of embedded computers. Cooling solutions directly add weight, volume, and cost to the product without delivering any functional benefit. What they provide is reliability. Without cooling, many electronic products would fail in a matter of minutes. Leakage current, and thus leakage power, goes up with smaller die-level feature sizes. Because leakage is temperature-dependent, thermal design becomes even more important. Cooling solutions have been implemented in embedded computers, resulting in fanless embedded computers.
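The coupling between leakage and temperature can be illustrated with a small fixed-point calculation. The sketch below is not taken from any particular tool: it assumes a single junction-to-ambient thermal resistance and a leakage power that doubles for every fixed temperature increment. The function name, the 20 °C doubling interval, and the runaway limit are all hypothetical values chosen for illustration.

```python
def junction_temperature(t_ambient_c, p_dynamic_w, theta_ja_c_per_w,
                         p_leak_ref_w, t_ref_c=25.0, doubling_c=20.0,
                         runaway_limit_c=250.0, max_iter=500, tol=1e-6):
    """Iterate T_j = T_amb + theta_ja * (P_dyn + P_leak(T_j)) to a fixed point.

    Assumed leakage model (illustrative only): leakage power equals
    p_leak_ref_w at t_ref_c and doubles every doubling_c degrees.
    Returns (junction temperature in C, leakage power in W).
    """
    t_j = t_ambient_c
    for _ in range(max_iter):
        # Leakage at the current temperature estimate.
        p_leak = p_leak_ref_w * 2.0 ** ((t_j - t_ref_c) / doubling_c)
        # Temperature that this total power would produce.
        t_next = t_ambient_c + theta_ja_c_per_w * (p_dynamic_w + p_leak)
        if t_next > runaway_limit_c:
            raise RuntimeError("no stable operating point: thermal runaway")
        if abs(t_next - t_j) < tol:
            return t_next, p_leak
        t_j = t_next
    raise RuntimeError("iteration did not converge")


if __name__ == "__main__":
    t_j, p_leak = junction_temperature(
        t_ambient_c=40.0, p_dynamic_w=4.0,
        theta_ja_c_per_w=5.0, p_leak_ref_w=0.5)
    print(f"T_j = {t_j:.1f} C, leakage = {p_leak:.2f} W")
```

With zero leakage the result reduces to the familiar ambient-plus-resistance-times-power estimate. With leakage included, the settled junction temperature is higher, and a sufficiently poor thermal resistance leaves no stable operating point at all.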

Electronics cooling, or thermal design, is part of the product creation process. Some organizations consider thermal design to be part of the mechanical design of a product. This is most common in traditional industries such as automotive, where the electronics content of a product has, until quite recently, increased slowly over time.

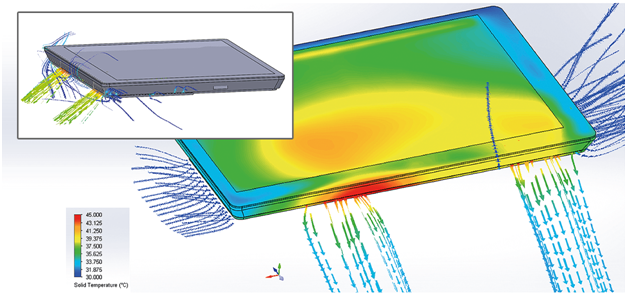

The engineers involved and the design environment influence how thermal design is implemented. Both the type of product being developed and the volume in which it will be produced also have an influence. In traditional industries where computational fluid dynamics (CFD) is used to investigate product performance (e.g., aerospace, nuclear, and automotive), design times are relatively long, and safety and reliability take priority over extending product lifetime by lowering component temperatures by some safety margin. Effort is spent on building redundancy into the cooling system so that, if a fan fails, the system can still operate well within specification and the fan can be replaced while the system is in operation.

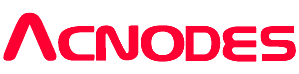

Miniaturization of electronic products leads to increasingly cluttered and complex geometries, with ever-tighter integration of the electronic and mechanical aspects of a product. One upshot of product miniaturization is that the flow spaces are reduced, often limiting the scope for convective cooling. These small spaces cause the flow to laminarize, with turbulence levels dominated by wall-generated shear. This actually reduces the numerical demands of capturing the effects of turbulence. Over time, the temperature rise within the air has made a diminishing contribution to the temperature rise of the junction within the IC package above ambient.
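A quick Reynolds number estimate shows why narrow flow passages tend toward laminar flow. The sketch below assumes air properties near 300 K, treats the passage as a wide parallel-plate channel (hydraulic diameter of twice the gap), and uses the commonly quoted rule-of-thumb transition value of about 2300 for internal flow. The function names and the example gap and velocity are hypothetical.

```python
AIR_DENSITY_KG_M3 = 1.16        # approximate, air near 300 K
AIR_VISCOSITY_PA_S = 1.85e-5    # approximate dynamic viscosity, air near 300 K
LAMINAR_LIMIT = 2300.0          # rule-of-thumb threshold for internal flow


def parallel_plate_hydraulic_diameter(gap_m):
    """Hydraulic diameter of a channel much wider than its gap: D_h = 2 * gap."""
    return 2.0 * gap_m


def reynolds_number(velocity_m_s, hydraulic_diameter_m,
                    density=AIR_DENSITY_KG_M3, viscosity=AIR_VISCOSITY_PA_S):
    """Re = rho * v * D_h / mu."""
    return density * velocity_m_s * hydraulic_diameter_m / viscosity


def is_likely_laminar(velocity_m_s, gap_m):
    """True when the estimated Reynolds number is below the rule-of-thumb limit."""
    d_h = parallel_plate_hydraulic_diameter(gap_m)
    return reynolds_number(velocity_m_s, d_h) < LAMINAR_LIMIT


if __name__ == "__main__":
    # Hypothetical case: 3 mm gap between a board and a chassis wall, 1 m/s air.
    d_h = parallel_plate_hydraulic_diameter(0.003)
    print(f"Re = {reynolds_number(1.0, d_h):.0f}")
    print("likely laminar:", is_likely_laminar(1.0, 0.003))
```

For gaps of a few millimeters and air speeds of around 1 m/s, the estimate falls well below the threshold, which is consistent with the laminarization described above.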

Miniaturization also affects the choice of cooling technology. Years ago, the limited space in laptops gave rise to the use of centrifugal fans instead of the traditional axial fans used in desktops. Heat pipes were used to transport heat from the centrally located CPU to a finned section of the heat pipe downstream of the centrifugal fan, which exhausted directly to the ambient environment. Heat spreaders and gap pads also find use in space-constrained devices, as do synthetic jets, particularly for LED lighting applications.

Keeping the thermal model up to date with changes in the design flow is critical for making timely decisions, avoiding design rework, and achieving faster time-to-market. Beyond geometry, thermal simulation requires other information, such as thermal data on the materials used in the product and the power consumption of components. Electronics cooling models are unique because of the number of “boundary conditions” that need to be implemented. In addition to the geometry, these include material data, thermal attributes, surface properties including roughness, mesh requirements, and, in the case of fans, performance data and built-in behavioral models. The ability to store all of this information in a single part greatly reduces the time needed to build a model.
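As a rough illustration of what storing everything in a single part can look like, the sketch below bundles geometry, material data, power, surface properties, and an optional fan curve into one record. It does not reflect the data model of any specific electronics-cooling tool. Every class and field name is hypothetical, and the fan curve is a simple piecewise-linear interpolation of made-up points.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Material:
    name: str
    conductivity_w_per_m_k: float


@dataclass
class FanCurve:
    # (volume flow in m^3/s, static pressure in Pa), strictly increasing flow.
    points: List[Tuple[float, float]]

    def pressure_at(self, flow_m3_s: float) -> float:
        """Piecewise-linear static pressure, clamped to the end points."""
        pts = self.points
        if flow_m3_s <= pts[0][0]:
            return pts[0][1]
        if flow_m3_s >= pts[-1][0]:
            return pts[-1][1]
        for (q0, p0), (q1, p1) in zip(pts, pts[1:]):
            if q0 <= flow_m3_s <= q1:
                return p0 + (p1 - p0) * (flow_m3_s - q0) / (q1 - q0)
        raise ValueError("fan curve points must be sorted by flow")


@dataclass
class ThermalPart:
    name: str
    size_m: Tuple[float, float, float]     # bounding box: x, y, z
    material: Material
    power_w: float = 0.0                   # dissipated power
    emissivity: float = 0.9                # surface property for radiation
    roughness_m: float = 0.0               # surface roughness
    max_cell_size_m: Optional[float] = None  # mesh requirement, if any
    fan_curve: Optional[FanCurve] = None   # only set for fan parts


if __name__ == "__main__":
    fan = ThermalPart(
        name="example_fan",
        size_m=(0.04, 0.04, 0.01),
        material=Material("plastic", 0.2),
        fan_curve=FanCurve([(0.0, 50.0), (0.01, 30.0), (0.02, 0.0)]),
    )
    print(fan.fan_curve.pressure_at(0.005))
```

Keeping the fan's performance data on the part itself means that dropping the part into a new model brings its behavior along with its geometry.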

In addition to providing a method for easily developing models of new, innovative designs, electronics-cooling tools also need to handle those parts of a design that get reused, like a chassis. It should be easy to plug a new board into an existing chassis. This process is greatly enhanced by an efficient library.

An important aspect of thermal design is determining which uncertainties in the model have the greatest effect on critical component temperatures. Once these have been assessed, efforts can be directed toward improving those aspects of the design, both through design changes and by obtaining more accurate data for the simulation. The current state-of-the-art methodology is to use measurement to underpin the simulation process. With this approach, customers have been able to significantly reduce the time required to close the thermal design, reduce the cost of the thermal design effort, and achieve a model fidelity that can predict temperature rises above ambient. This inverts the traditional process of using physical prototyping after the design is complete to correct design mistakes.
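Ranking uncertainties can be sketched with a simple one-at-a-time perturbation study. The example below is a minimal illustration, not a substitute for a full simulation: it assumes a series thermal resistance model for the junction temperature and perturbs each input by its assumed relative uncertainty. All parameter names, nominal values, and uncertainty fractions are hypothetical.

```python
def junction_temp_c(p):
    """Series resistance model: T_j = T_amb + P * (R_jc + R_tim + R_sa)."""
    return p["t_ambient_c"] + p["power_w"] * (p["r_jc"] + p["r_tim"] + p["r_sa"])


def rank_sensitivities(model, nominal, rel_uncertainty):
    """Perturb one input at a time by +/- its relative uncertainty.

    Returns (name, half-range of the output in C) pairs, largest effect first.
    """
    effects = {}
    for name, frac in rel_uncertainty.items():
        high = dict(nominal)
        low = dict(nominal)
        high[name] = nominal[name] * (1.0 + frac)
        low[name] = nominal[name] * (1.0 - frac)
        effects[name] = abs(model(high) - model(low)) / 2.0
    return sorted(effects.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical nominal values (resistances in C/W) and uncertainties.
    nominal = {"t_ambient_c": 40.0, "power_w": 10.0,
               "r_jc": 0.5, "r_tim": 0.2, "r_sa": 2.0}
    uncertainty = {"power_w": 0.10, "r_jc": 0.05, "r_tim": 0.50, "r_sa": 0.10}
    for name, delta in rank_sensitivities(junction_temp_c, nominal, uncertainty):
        print(f"{name}: +/- {delta:.2f} C")
```

The inputs at the top of the ranking are the ones where better measured data, or a design change, would do the most to tighten the predicted component temperature.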